Claude is an information architecture laboratory (Spilling Ink #15)

"Tech has an unhealthy relationship with tools: we use them to define our goals." – Pavel Samsonov

Designing a product’s information architecture means designing a model for how all the pieces of the product fit together. The structural pieces of a product include the fields of information and the screens upon which they’re displayed. The structure also includes the relationships between these pieces: the flows from one screen to the next and the entities or categories that fields belong to.

A model names the pieces – what you call the screens, the fields, the flows, and the entities. We’re used to naming things: we name categories of content, metadata values, and taxonomy terms. But product models operate at a higher level of abstraction than a content information architecture, which deals with topics and tags. A content information architecture plans the space in which the information lives. A product information architecture, by contrast, is a framework for designing the spaces within a product.

We’re building spaces for work to happen, where users insert, manipulate, update, and share information. Those spaces have a shape that influences the kinds of things users can do, and they have rules that govern how the information behaves once it’s in there. To meet the ever-increasing needs of users, those spaces are dynamic. So we can’t simply design the spaces themselves, because they become obsolete pretty quickly; we also have to design the framework for designing the spaces. That framework consists of the names, rules, and relationships we give to the parts of an information system, within the context of our product, so that we can collaborate with our teams based on a shared understanding.

IA + AI

Like every other red-blooded information architect, I’ve been wondering what role artificial intelligence will play in my practice and process.

Recently, I’ve been using Claude to work through a model for one of my clients. Claude has allowed me to explore a potential information architecture for their complex product.

Here are a few ways I have used Claude to do this:

Produce sample data

The product’s demo doesn’t have enough sample data for my purposes, so I used Claude to generate some. Claude is very good at this. Having already specified the domain, I gave Claude the title of a report and it produced a list of fields the report might have.

This one, of all the steps I list here, makes me the most uneasy. As a consultant (and a product of a liberal arts education), I pride myself on getting up to speed on a new domain quickly. In this case, I leaned on the robot to fill in that gap.

On the other hand, developing a model means producing a framework that can accommodate a wide range of use cases, and data that is realistic, if not exactly right, is useful for this.

Apply hypothesis model

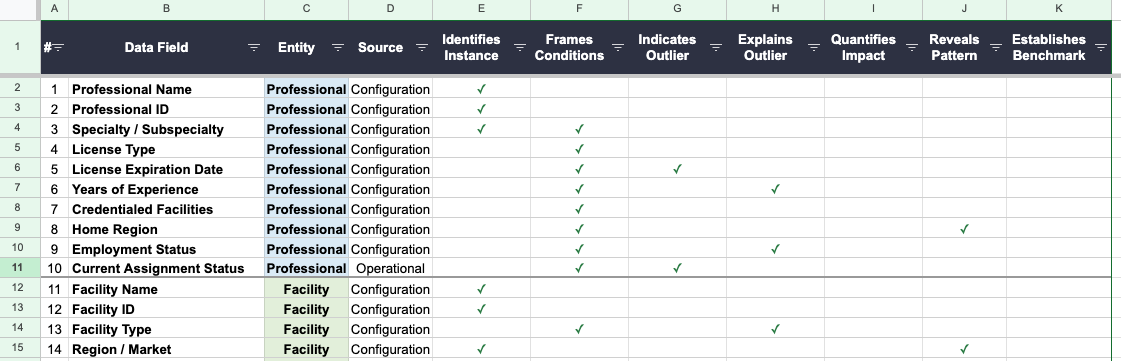

I had an idea for how to classify these data fields to support the team’s design efforts. My hypothesis model categorized data fields by their function relative to the main use case, with categories including “Identifies instance” and “Explains outlier.” I told Claude my hypothesis and asked it to take a swing at categorizing the fields. It produced a spreadsheet. With each of Claude’s attempts, I found opportunities to revise the model. Working with Claude in this way also gave me a chance to spell out the hypothesis clearly enough for the robot to apply it to our sample data.
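For readers who think in code, here is a minimal sketch of what “applying” a hypothesis model amounts to, assuming the categorization lives as a simple field-to-category mapping, like one column of a spreadsheet. Only the two category labels come from this post; the field names and the `apply_model` helper are hypothetical. The useful output is the list of fields that don’t fit, since those are the places where the model needs revising.

```python
from collections import defaultdict

# Hypothesis model: categories describe a field's function relative to the
# main use case. These two labels come from the post; a real model has more.
FUNCTION_CATEGORIES = {
    "Identifies instance",
    "Explains outlier",
}


def apply_model(assignments, categories):
    """Group fields by category and collect fields the model can't place."""
    grouped = defaultdict(list)
    misfits = []
    for field, label in assignments.items():
        if label in categories:
            grouped[label].append(field)
        else:
            misfits.append(field)  # a hint that the model needs revising
    return dict(grouped), misfits


# Hypothetical sample fields for a hypothetical report
sample = {
    "Report ID": "Identifies instance",
    "Region": "Identifies instance",
    "Variance note": "Explains outlier",
    "Last refreshed": None,  # no category in the model fits this field
}

grouped, misfits = apply_model(sample, FUNCTION_CATEGORIES)
print(grouped)
print(misfits)
```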

Stress-test the model

I gave Claude two other reports and asked it to do the same thing: generate candidate data fields and apply the model to them. For each report, it gave me a few dozen fields that might appear there and categorized them using the hypothesis model, giving me even more inputs for revising the model. Stress-testing is a normal part of my process, and Claude allowed me to do it faster.

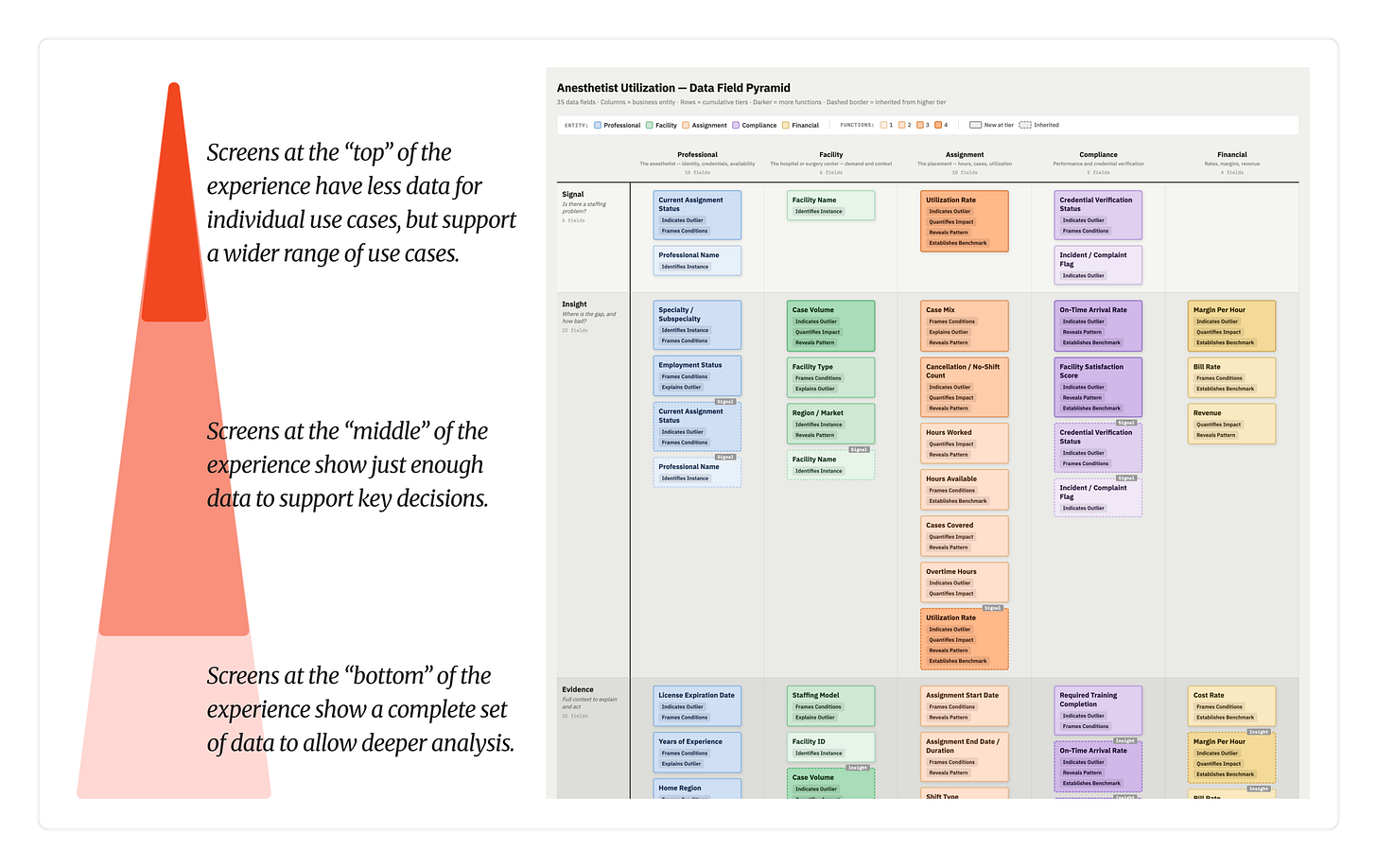

Elaborate the model

The first hypothesis I fed to Claude was just one way of classifying the data. The larger model I had in mind involved several ways of classifying the fields, including a way to prioritize them to indicate where users should see them along the flow. The variety of classifiers – function, role, priority, etc. – establishes a language for designers and product teams to use when discussing the structure of the experience for their specific feature.

I expressed this ultimate goal to Claude to embed the intention of the model in the process. My aim was not to determine the structure of the experience for this report at this time. Instead, I’m building a framework to help designers have conversations with product and engineering teams. That framework needs to be portable – working across different areas within the product – and meaningful – genuinely accessible to the people working with it.
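The multi-classifier version of the model can be sketched the same way. This assumes each field carries one value per classifier and that priority is a number where 1 means “show this earliest in the flow.” The post doesn’t say how the classifiers are encoded, so the `role` values, the field names, and the helpers `missing_facets` and `stages` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassifiedField:
    """One data field tagged along several classifiers (facets)."""
    name: str
    function: str  # function relative to the main use case
    role: str      # hypothetical facet values, for illustration only
    priority: int  # assumption: 1 = users should see it earliest in the flow


def missing_facets(field):
    """Return the names of text classifiers left blank for this field."""
    return [facet for facet in ("function", "role") if not getattr(field, facet)]


def stages(fields):
    """Group field names by priority, earliest stage of the flow first."""
    result = {}
    for fld in sorted(fields, key=lambda f: (f.priority, f.name)):
        result.setdefault(fld.priority, []).append(fld.name)
    return result


# Hypothetical fields from a hypothetical report
fields = [
    ClassifiedField("Report ID", "Identifies instance", "label", 1),
    ClassifiedField("Variance note", "Explains outlier", "annotation", 2),
    ClassifiedField("Region", "Identifies instance", "filter", 1),
    ClassifiedField("Last refreshed", "", "metadata", 3),
]

print(stages(fields))
print({f.name: missing_facets(f) for f in fields if missing_facets(f)})
```

Grouping by priority is one way the classifiers could become a rough screen order to discuss with a team, and a blank facet flags a field the model doesn’t yet account for.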

Apply hypothesis model to sample data (again)

With an expanded model, I asked Claude to apply it to the data fields. I was working with three different sample reports, and I rotated which one I started with, but eventually I asked Claude to apply the model to all of them. Each time, this gave me a chance to see how the revised model broke in a different situation.

Feedback on the model, specifically what’s missing

Throughout this process I occasionally asked Claude what was missing from the model, what hadn’t been accounted for. This was a helpful gut check for me. None of these queries led to any meaningful changes in the model, but seeing the results from this prompt allowed me to build a narrative around the choices I’d made.

Visualize the model

Most of this process involved Claude producing spreadsheets with the data fields categorized, but I knew that it would only really have an impact if the team could visualize the fields. We went through seven or so iterations of the visualization, applying different visualization techniques to various aspects of the model. This rapid-fire iteration was eye-opening because I could focus on the way in which we were visualizing and not have to mess with the details myself. In providing visual direction to Claude, I also identified some assumptions I’d been making but hadn’t articulated.

Claude wasn’t great at making suggestions here: its graphic design choices didn’t highlight the distinctions that mattered.

~

The process with the client is ongoing, so we’ll see whether my hypothesis model breaks when applied by humans. I fully expect it to break, and I see that not as a flaw but as part of the design process. No model is perfect in its first iterations. What Claude allowed me to do is provide a level of detail about the model that would have been time-consuming and challenging to produce on my own. This level of detail helped my stakeholders see what I was trying to do.

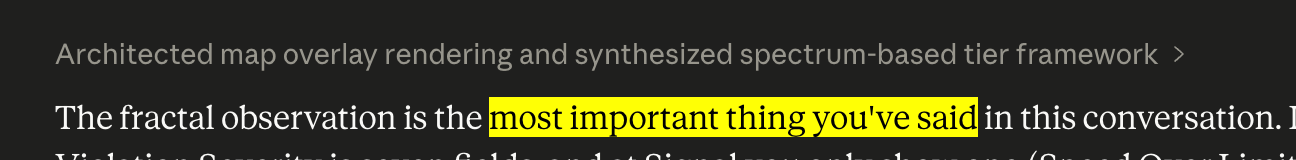

Crisis of confidence

Given how encouraging Claude is in its responses, I have to be careful. No other design tool has ever told me that an idea of mine was “the most important thing you’ve said in this conversation.” I can’t put much stock in these judgements. A client tells me that? Sure. A trusted colleague says it? I pay close attention. For Claude to make this claim is like my own consciousness telling me that I’m a genius. Let us not gaze at our reflections too closely.

In the last few days, I’ve started reconsidering part of the model. This is inevitable. As you start to become comfortable with a model, you begin to question the whole thing. Does this even matter? Is it even worthwhile? What purpose does this particular part serve?

I’ve heard people say that these Large Language Models can be thought partners. But Claude will indulge every detour, every reconsideration. A good thought partner (i.e. one that’s human) will challenge my ideas, creating friction in the thought process. Claude does not create friction, even if I tell it to. Claude will not truly help in a crisis of confidence. Instead, it will continue to hold up the funhouse mirror, making it look like maybe I’m onto something, when what I really need is the opposite.

In my other writing, I’ve been pretty cynical about AI. In reality, I’m fascinated and excited about the technology, but angry at the confluence of this technological marvel, the unchecked greed of the billionaire class, and the cowardice and apathy of US regulators. I don’t believe any good comes from ignoring the technology. Instead, it’s wise for us to understand it first hand, because otherwise all we have to go on is what we hear. And the hype machine is out of control.

Terence Tao says about AI: “[Math] problems are like distant locations you would hike to… AI tools are like taking a helicopter to drop you off at the site. You miss all the benefits of the journey itself.”

Design is also a journey.

My approach here is not to replace the journey or short-circuit it, but to enhance it. Yes, I might have learned something from refining the graphic design and building the spreadsheets myself, rather than having Claude do it for me. That, however, would have required me to zoom in and out constantly. Instead, I offloaded the details of specific use cases so I could look across use cases to see how the model fared.

With Claude doing the heavy lifting of applying the model to the data, I could focus on whether the model was capable of doing what I wanted it to do. Claude is not a thought partner but a laboratory, where I can explore and expand upon hypotheses so that I bring more robust models to my true thought partners: my colleagues and co-workers.

Can I help?

Spilling Ink is the (sorta) monthly newsletter from Curious Squid, Dan Brown’s IA and UX design agency based in Washington, DC. We help clients large and small work through wicked problems. Have a product experience that needs untangling? Let’s talk!

Listen to Unchecked

Unchecked is the monthly podcast about disinformation and the role designers must play in creating more resilient information spaces.

I’m in the midst of an IA tangle (and using Claude to assist). Have you tried any skills to play the role of your intellectual foil?

Thanks for sharing this experience, Dan 👏

I left a note over on LinkedIn wondering if you had done anything to tune Claude's personality to make it more of the thinking partner you might want, at least temperamentally - I have found that helps (although perhaps it just helps me be less sceptical which may not be ideal!)

I have been doing some work building an app using Replit recently and observing the significant 'natural' shortcomings with regards to IA. I haven't specifically prompted it (yet) but it gives no consideration to what the overall navigational architectural experience will be like for a human user. It continues to just throw more things, in no perceivable order, into a navigation menu. Often with labels that are confusingly similar to something that is already there. And sometimes it just entirely forgets to put anything in any navigation that lets me find/get to the feature it has built.

Obviously this just exposes all the extra work I should be doing in advance to prompt it to consider these things (and even that would not be entirely successful I suspect)... I think it is interesting to see what it does and doesn't (can and can't) do, out of the box, without prompting.

I have no idea what kind of rubbish people are getting back after letting their agents autonomously build everything while they sleep! Or perhaps everyone is already just writing much more sophisticated IA prompting than I am?

Things I think about. Thanks for the inspiration.